A recent article (Hamrang, et al. Trends in Biotechnology, 2013) made me think about the impact modern technology is having on the way scientific research develops and, in particular, about my own experience of applying some of this technology. I thought it might be interesting to describe some of these technologies, how they have influenced my own research, how they might develop to provide new approaches for the advancement of science, and how this will change what we need to teach.

A good place to start is SINGLE MOLECULE ANALYSIS, a concept I had never considered in my early research career but which became a possibility during the 1990s. The first single molecule technique I heard of was the Scanning Tunnelling Microscope (STM), but I could not see uses for this device outside of chemistry, as the objects to be visualised were in a vacuum. However, the technology quickly developed into the Atomic Force Microscope (AFM), and the study of biological molecules was soon under way. The AFM measures surface topography and can visualise large proteins as single molecules. My first involvement was to visualise DNA molecules that were being manipulated by a molecular motor. The resolution was astounding but, more importantly, we were able to use this technology to study intermediates that had been biochemically “frozen” in position and to resolve features we never expected to see. Further studies allowed us to examine protein-protein interactions and the supramolecular assembly of the motor. The wonderful thing about this technology is that interpretation of the data has quickly moved from suspicion of “artefacts”, and a lack of faith that images showed what was thought to be there, to a situation where major advances are possible through direct topographical studies. Developments of this technology are likely to include automatic cell identification, in vivo measurements using fine capillary needles and measurements of ligand binding to surface targets on cells, which could influence drug development and biomedical measurements. Another developing technology related to AFM is the multiple-tip biosensor, which can sense minute amounts of material in a variety of situations (a “molecular sniffer”; one use I heard of directly from the developer was for wine tasting/testing!).
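Tracing molecules in AFM images is one common way to turn these pictures into numbers, for example to compare DNA lengths before and after a motor has acted on them. A minimal sketch, assuming each molecule has already been traced into an ordered list of (x, y) points in nanometres and that the B-DNA rise of about 0.34 nm per base pair applies; lengths measured on a surface can differ somewhat from this, so calibrating against DNA of known length is sensible. The function names are hypothetical.

```python
import math

# Approximate rise per base pair for B-form DNA (nm). Assumed value; ideally
# calibrate against DNA of known length imaged under the same conditions.
RISE_NM_PER_BP = 0.34


def contour_length_nm(points):
    """Sum the segment lengths of a traced DNA molecule.

    `points` is a list of (x, y) coordinates in nanometres, ordered along the
    molecule from one end to the other.
    """
    return sum(
        math.hypot(x2 - x1, y2 - y1)
        for (x1, y1), (x2, y2) in zip(points, points[1:])
    )


def length_in_bp(points, rise_nm_per_bp=RISE_NM_PER_BP):
    """Convert a traced contour length into an approximate number of base pairs."""
    return contour_length_nm(points) / rise_nm_per_bp
```

For example, a trace through (0, 0), (3, 4) and (3, 10) nm has a contour length of 11 nm, or roughly 32 bp.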

My second single molecule technique was a magnetic tweezer setup, which tracks the movement of a magnetic bead attached to a single molecule (in our case DNA). This allowed us to determine how a molecular motor moves DNA through the bound complex but, perhaps more importantly, it led us to develop a biosensor based on this technology that could be used to measure drug-target interactions at the single molecule level, and perhaps allow single molecule sensing in anti-cancer drug discovery. This technology is closely related to the optical tweezer systems that have been used in similar studies, and future developments are certain to make such instruments cheaper and easier to use, widening their application in biomedical research. The key to this development will be the increased sensitivity of single molecule studies, which will enable a more detailed understanding of the intermediate steps in molecular motion driven by biomolecules. I imagine that as newer versions of these devices become more automated, they will be used as biosensors to study more complex systems that involve molecular motion. In the short term, it seems to me that there is scope for applying these devices to understanding protein amyloid formation and stability, with a view to identifying mechanisms for destabilising such structures.
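One widely used way to calibrate the stretching force in a magnetic tweezer experiment, shown here as a minimal sketch rather than the procedure used in our setup, relies on equipartition: a tethered bead behaves like an inverted pendulum, so the force F on a tether of extension l is F = k_B·T·l / ⟨δx²⟩, where ⟨δx²⟩ is the variance of the bead's lateral position. The sketch assumes bead positions have already been extracted from video frames in metres, and it ignores camera motion blur, which can make the measured variance too small at high forces. The function name is hypothetical.

```python
import statistics

BOLTZMANN_J_PER_K = 1.380649e-23  # exact SI value


def tether_force_n(x_positions_m, extension_m, temperature_k=298.0):
    """Estimate the force (N) stretching a bead-tethered molecule.

    Equipartition: F = k_B * T * l / <dx^2>, where l is the tether extension and
    <dx^2> is the variance of the bead's lateral (x) position.
    """
    if len(x_positions_m) < 2:
        raise ValueError("need at least two position samples")
    variance = statistics.pvariance(x_positions_m)
    if variance == 0:
        raise ValueError("bead position did not fluctuate")
    return BOLTZMANN_J_PER_K * temperature_k * extension_m / variance
```

As a sense of scale, with a 1 µm extension at 298 K, a lateral standard deviation of about 64 nm corresponds to roughly 1 pN; stronger pulling means smaller fluctuations.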

SURFACE ATTACHED BIOMOLECULAR ANALYSIS.

The best-known system in this category of analytical devices is Biacore’s Surface Plasmon Resonance (SPR), which detects binding through changes in the plasmon resonance of electrons in a thin gold film: as material binds to the surface, the local refractive index changes and shifts the resonance, giving an indirect measure of bound mass. We have used this to study protein-DNA interactions and subunit assembly, and the technique provides useful confirmation of results from older techniques such as electrophoresis. I have been involved in discussions about applying this technology in the field, but reliability and setup remain a problem. In comparison, the Farfield dual beam interferometer can use homemade chips that simplify setup, and it seems more reliable for similar measurements. Where I see potential for these devices is in the study of protein aggregation, which could be of tremendous value in the study of amyloid-based diseases. This idea sprang from discussions with Farfield about using their interferometer to detect crystallization, and it would make an interesting project. However, if these devices are to have a major impact in biomedical sciences, they need to be easier to set up, more reliable and smaller. Recent advances are leading SPR towards single molecule sensing (Punj, D., et al., Nat Nano, 2013). I believe the real key to implementing this technology as a biosensor is to incorporate two technologies in the same device. We proposed a dynamic system on an interferometer chip whose activity would switch off the interferometer when active. This could be used in drug discovery, targeting a drug at two systems simultaneously. If massively parallel systems can be developed, possibly based around laminar flow, I can see a use in the molecular detection of hazardous molecules using either antibodies or aptamers.
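SPR binding data are usually interpreted by fitting a kinetic model to the sensorgram (response against time). The simplest widely used model is 1:1 Langmuir binding, in which an analyte at concentration C binds an immobilised partner with association rate constant k_a and dissociation rate constant k_d, so that K_D = k_d / k_a. The sketch below assumes that model and ignores mass transport and surface heterogeneity; protein-DNA or subunit-assembly interactions may well need more complex models, and the numbers in the example are illustrative, not our data. The function names are hypothetical.

```python
import math


def association_response(t_s, conc_m, ka, kd, rmax):
    """SPR response during analyte injection for 1:1 (Langmuir) binding.

    t_s: time since injection start (s); conc_m: analyte concentration (M);
    ka: association rate constant (1/(M*s)); kd: dissociation rate constant (1/s);
    rmax: response if every immobilised site were occupied (RU).
    """
    k_obs = ka * conc_m + kd            # observed rate constant
    r_eq = rmax * ka * conc_m / k_obs   # equals rmax * C / (C + KD), with KD = kd / ka
    return r_eq * (1.0 - math.exp(-k_obs * t_s))


def dissociation_response(t_s, r0, kd):
    """Response after the injection ends (buffer only), decaying from r0."""
    return r0 * math.exp(-kd * t_s)
```

With k_a = 10⁵ M⁻¹s⁻¹ and k_d = 10⁻³ s⁻¹ (K_D = 10 nM), for instance, injecting 10 nM analyte brings the response towards half of R_max, and once buffer flows again it falls by half every ln 2 / k_d ≈ 693 s.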

COMPUTER BASED IMAGE ANALYSIS.

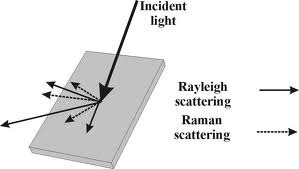

I have not used this technology directly, but I have seen the results applied to the molecular motor that I have worked with. The value of the approach is that cryo-EM gathers many images of a large protein complex, which can be aligned and averaged computationally, allowing structural studies of systems that cannot be crystallized or studied by NMR. My feeling is that as computing power increases, this technique, combined with molecular modelling in silico, will provide structural information for many complex biological systems. This knowledge will greatly influence the design of drugs and will aid the biochemical analysis of complex systems. I expect further development of this technology to revolve around combining it with other techniques for visualising biomolecules. One I have mentioned before is Raman spectroscopy, which could allow studies of these complexes in situ; another could be single molecule fluorescence (Grohmann, et al. Current Opinion in Chemical Biology). I can easily imagine collaborative research projects that bring a variety of such techniques to bear on producing 3D images of real biological systems isolated from cells. Such research would have to follow existing models of bidding for time on such equipment in centres of excellence, which would bring together visualisation techniques with single molecule analysis and data from genomics and proteomics. The research lab of the future will depend on much more international collaboration than we have seen up to now!
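The core idea behind this kind of image analysis is that averaging many noisy images of the same particle suppresses random noise: for N aligned images with independent noise, the noise in the average falls roughly as 1/√N. The sketch below shows only that averaging step, on synthetic one-dimensional "images"; real cryo-EM processing must first determine each particle's orientation and align and classify the images, which is the hard part and is not attempted here. All function names are hypothetical.

```python
import random
import statistics


def average_images(images):
    """Pixel-wise mean of equally sized, already-aligned images (flat lists)."""
    n = len(images)
    return [sum(pixels) / n for pixels in zip(*images)]


def rms_error(image, reference):
    """Root-mean-square difference between an image and the true signal."""
    return statistics.fmean([(a - b) ** 2 for a, b in zip(image, reference)]) ** 0.5


def noisy_copies(signal, n_images, noise_sd, seed=0):
    """Simulate n_images copies of `signal` with independent Gaussian noise."""
    rng = random.Random(seed)
    return [[p + rng.gauss(0.0, noise_sd) for p in signal] for _ in range(n_images)]


if __name__ == "__main__":
    signal = [1.0 if 20 <= i < 44 else 0.0 for i in range(64)]  # a simple 1D "particle"
    images = noisy_copies(signal, n_images=100, noise_sd=1.0)
    print("single image RMS error:", round(rms_error(images[0], signal), 3))
    print("average of 100 RMS error:", round(rms_error(average_images(images), signal), 3))
```

With 100 images the error of the average should come out roughly ten times smaller than that of a single image, which is why collecting very large numbers of particle images matters.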

STUDIES USING NANOPORES.

Current technology in this area divides into two types of nanopore: physical holes in a surface and reconstituted biological pores. I have used a physical nanopore to investigate the separation of proteins from DNA by electrophoresis across the pore. The beauty of this system is that it also quantifies the number of molecules crossing the pore, because each molecule that passes through transiently blocks the ionic current and produces a countable event. I imagine that such devices will develop by attaching biomolecules to the surface around the pore, which would introduce specificity into the device. What I would have liked to develop, however, is a dynamic device for the ordered assembly of molecules (an artificial ribosome), in which the nanopore separates the assembly line from the drive components. Such are the dreams of a retired scientist!
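Counting molecules in this way comes down to finding transient drops ("blockades") in the ionic current recorded across the pore. A minimal sketch, assuming a clean trace with a known open-pore current, a simple threshold at a fixed fraction of that level, and a minimum event duration to reject noise spikes; the threshold and duration values are illustrative assumptions, and real traces also need filtering and baseline tracking. The function name is hypothetical.

```python
def find_blockades(current, open_pore_level, threshold_fraction=0.8, min_samples=3):
    """Return (start_index, n_samples) for each current blockade in a trace.

    A blockade is a run of at least `min_samples` consecutive samples whose
    current is below `threshold_fraction` of the open-pore level.
    """
    threshold = open_pore_level * threshold_fraction
    events = []
    start = None  # index where the current run of low samples began
    for i, value in enumerate(current):
        if value < threshold:
            if start is None:
                start = i
        elif start is not None:
            if i - start >= min_samples:
                events.append((start, i - start))
            start = None
    # A blockade may still be in progress when the recording ends.
    if start is not None and len(current) - start >= min_samples:
        events.append((start, len(current) - start))
    return events
```

The number of molecules crossing is then `len(find_blockades(...))`, and the run lengths give dwell times, which can help distinguish free DNA from DNA still carrying bound protein.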

![nanopore_x616[1]](https://firmank.files.wordpress.com/2013/06/nanopore_x6161.jpg?w=293&h=300)

Biological nanopores are the main focus for single molecule sequencing of DNA, and the future must be portable, personal sequencing devices: DNA sequence information must reside with the source of the DNA, and for humans this will eventually lead to personal devices. However, the volume of available data will be enormous, and the growth of “omics” research will require new ways to store, organise and access this information. A new approach to studying biological systems, in which analysis of these data gives a better understanding of complex systems, is already under way. This will eventually become part of biomedicine and will go hand in hand with personalised medicine.
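As a rough sense of scale, a human genome is about 3.1 billion bases, so sequencing it to 30-fold coverage (a common target, assumed here) produces about 9.3 × 10¹⁰ bases. One of the simplest storage tricks is to pack each A, C, G or T into 2 bits instead of an 8-bit character, as sketched below; even packed, that coverage still needs roughly 23 GB before quality scores or raw nanopore signal are kept. The function names are hypothetical, and real formats must also handle ambiguous bases such as N.

```python
_CODE = {"A": 0, "C": 1, "G": 2, "T": 3}
_BASES = "ACGT"


def pack_dna(seq):
    """Pack an A/C/G/T string into bytes, four bases per byte (2 bits each).

    Raises KeyError for any other character (e.g. N), which this sketch does not handle.
    """
    out = bytearray((len(seq) + 3) // 4)
    for i, base in enumerate(seq.upper()):
        out[i // 4] |= _CODE[base] << (2 * (i % 4))
    return bytes(out)


def unpack_dna(data, length):
    """Recover `length` bases; the length is stored separately because the last byte may be padded."""
    return "".join(_BASES[(data[i // 4] >> (2 * (i % 4))) & 0b11] for i in range(length))


def packed_size_bytes(n_bases):
    """Bytes needed to store n_bases at 2 bits per base."""
    return (n_bases + 3) // 4


if __name__ == "__main__":
    bases_30x = 30 * 3_100_000_000  # ~30-fold coverage of a ~3.1 Gb genome (assumed)
    print(packed_size_bytes(bases_30x) / 1e9, "GB")
```

Packing gives a fourfold saving over plain text, but at population scale even that leaves a data problem that needs the new approaches described above.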

I was once asked by a student what the future holds for biology. I now know it will be an area of significant growth for many years to come, but this requires the right focus for investment and a new direction for undergraduate studies. Good luck to those I have taught, who now have to lead these developments.

I was reading an article in Scientific American today that got me thinking about the complexities of biology. The article described the production of a virtual bacterium, using computing to model all of the known functions of a simple single cell. It was a compelling read and presented a rational argument for how this could be achieved, based on modelling a single bacterium through to the point where it divides. The benefits of success would be immense for both healthcare and drug development, but the difficulties are equally immense.

The copy number of a plasmid is tightly controlled in a bacterial cell, usually through the concentration of a plasmid-encoded RNA or protein that limits replication. When the concentration of this product drops, for example after division of the cell, replication occurs and the number of plasmids increases until the correct copy number is restored (as signalled by the concentration of the controlling gene product).
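This feedback loop can be caricatured in a few lines, in the spirit of the virtual-cell modelling mentioned above. It is a toy sketch under loud assumptions: the inhibitor level is taken to be directly proportional to copy number (so the set point can be expressed in copies), cell volume and growth are ignored, replication continues until the set point is reached, and plasmids are split exactly in half at division. Real copy-number control is stochastic and far more detailed. The function name and parameters are hypothetical.

```python
def simulate_copy_number(set_point, generations, start_copies):
    """Toy negative-feedback model of plasmid copy-number control.

    Returns the copy number just before each cell division.
    """
    copies = start_copies
    before_division = []
    for _ in range(generations):
        # Assumption: inhibitor concentration is proportional to copy number,
        # so "inhibitor below threshold" means "copies below set point".
        while copies < set_point:
            copies += 1  # inhibition is relieved, so another replication occurs
        before_division.append(copies)
        copies //= 2  # idealised division: each daughter receives half the plasmids
    return before_division


if __name__ == "__main__":
    print(simulate_copy_number(set_point=20, generations=4, start_copies=40))
```

Starting from an excess of plasmids, no replication occurs until division dilutes them below the set point, after which the copy number is restored in every generation.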
